Lecture: Ethics

Actuarial Data Science - Open Learning Resource

Recommended Reading

Learning Objectives

Ethical considerations are central to modern actuarial and data science practice, especially in the context of AI and automated decision-making. In this lecture, we explore key ethical principles, their implications for insurance pricing, and how to balance fairness, regulation, and business objectives.

Understand the key principles of AI ethics, including fairness, transparency, accountability, and privacy.

Explain the role of discrimination and fairness in insurance, and distinguish between social and economic perspectives.

Evaluate different approaches to mitigating bias and discrimination, including input-based and output-based methods.

Describe common quantitative fairness criteria and discuss their applicability in actuarial contexts.

Assess trade-offs between fairness, accuracy, and business objectives in real-world applications.

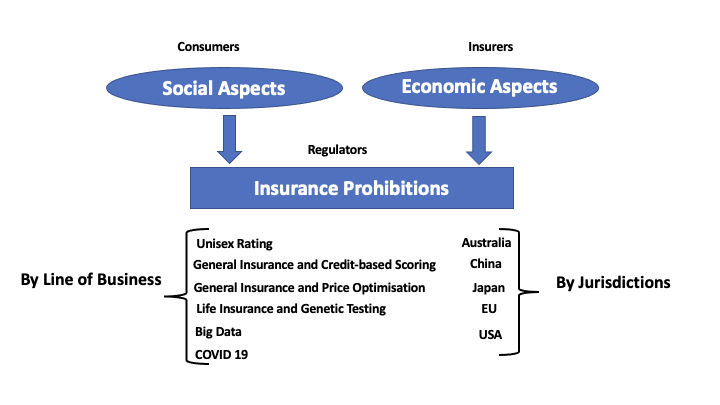

Revisiting the Data Science Lifecycle

Q: What is the most frequent failure in data analysis?

A: Problem formulation

“The most frequent failure in data analysis is mistaking the type of question being considered.”

— Leek and Peng (2015)

Major Principles of AI Ethics

Fairness and non-discrimination: Mitigate both direct and indirect discrimination embedded in data or model design.

Transparency and explainability: Ensure decisions are interpretable not only to developers, but also to customers and regulators.

Accountability: Clarify who is responsible when AI systems make errors (e.g. actuaries, developers, or firms).

Privacy and data ethics: Address issues related to third-party data, telematics, and social media sources.

Contestability: Provide individuals with meaningful channels to challenge and appeal automated decisions.

Stability and robustness: Ensure AI models remain reliable and perform as expected under real-world conditions.

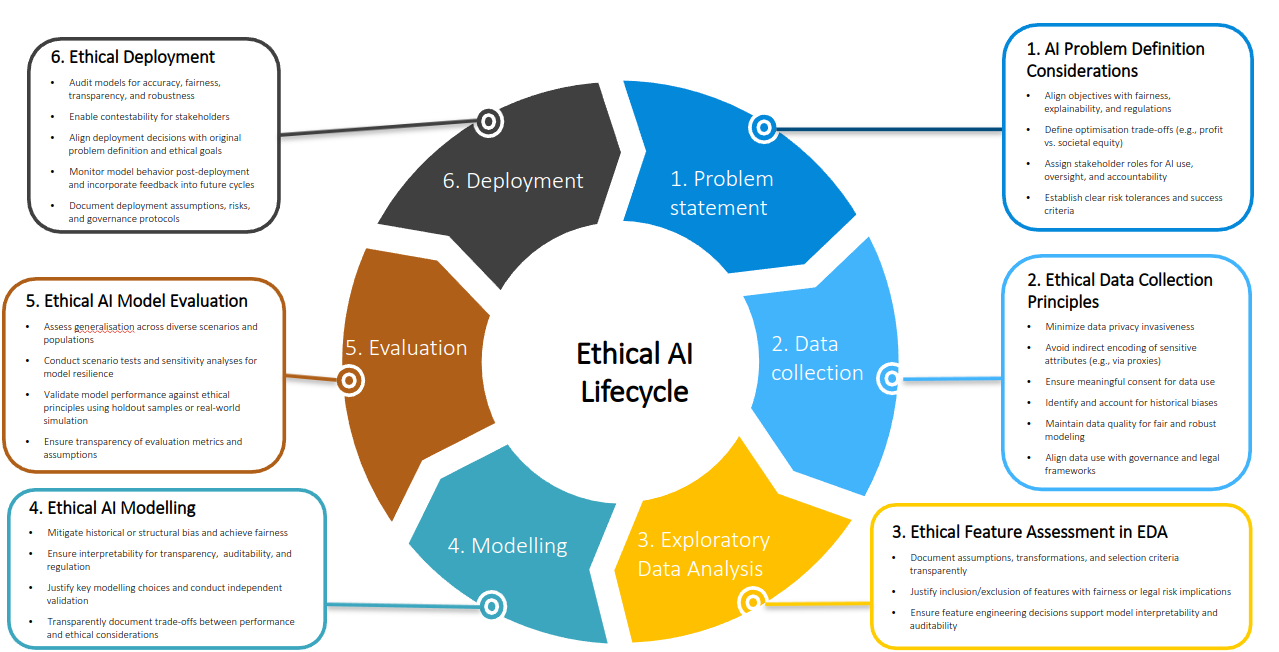

Ethical AI Lifecycle

“The actuarial profession should lead, not follow, in building public trust in AI systems.”

— The Actuary, IFoA

Regulatory Responses to AI and Discrimination in Insurance

Guidance on AI and Discrimination in Insurance (Australia)

Guidance Resource: AI and Discrimination in Insurance (AHRC)

From Bias to Fairness

How can bias related to protected attributes lead to unfair discrimination — and how can it be mitigated?

Topic 1: What principles should be used to assess the appropriateness of insurance discrimination (differentiation)?

Adapted from Frees and Huang (2023), North American Actuarial Journal

Background

Discrimination is widespread in society.

Insurance pricing is a distinctive case because the entire industry is built on discrimination in the sense of differentiating between risks.

However, certain characteristics (e.g., ethnicity, religion, heritage) are typically prohibited as a basis for decisions in:

- Issuance, renewal, or cancellation

- Coverage

- Underwriting and pricing

- Marketing

- Claims processing

Fairness Depends on Context

Is insurance a social good or an economic commodity?

Is risk pooling based on subsidising solidarity or chance solidarity?

Insurance as an Economic Commodity

- Voluntary purchase shifts insurance toward being an economic commodity.

- Market prices depend on the forces of supply and demand.

- It operates in a “free” market (limited regulation).

- Risk classification is advocated to address adverse selection and moral hazard and to improve economic efficiency.

- Life insurance is often viewed as a private (non-public) product, i.e., an economic commodity.

- Voluntary auto insurance is an economic commodity.

- Long-term care and disability insurance fall in between.

Is it fair to use that rating factor?

Control: Can the individual control the variable? e.g., owning a sports car is a choice, whereas many sensitive attributes (gender, race, ethnicity, nationality, etc.) are beyond the individual's control.

Mutability: Does the variable change over time (such as age) or stay fixed?

Causality: It is generally acceptable to use a variable if it is known to cause an insured event (e.g., cancer in life insurance).

Statistical Discrimination: A variable must have some predictive value for the underlying risk. This is a necessary, but not sufficient, condition.

Limiting or Reversing the Effects of Past Discrimination: e.g., discriminating based on skin color is more problematic than discriminating based on eye color.

Inhibiting Socially Valuable Behavior: e.g., people may be reluctant to participate in genetic testing research if insurers make decisions based on genetic test results.

Indirect Discrimination: A Grey Area in Regulation

Direct discrimination is prohibited, but indirect discrimination using proxies or more complex and opaque algorithms is not clearly regulated or assessed.

Do we have a clear definition of indirect discrimination?

How do we assess and mitigate indirect discrimination?

How to Mitigate Discrimination and Achieve Fairness?

- Input-based approach:

  - Restricting the use of protected or proxy variables (fairness through unawareness)

  - Specifying the feature importance for pricing (California regulations)

- Output-based approach: define quantitative fairness criteria, in either of two settings:

  - Protected attributes are not allowed (no direct discrimination)

  - Protected attributes are allowed as rating factors

Topic 2: How to evaluate fairness and mitigate indirect discrimination and bias in the context of AI and Big Data?

Adapted from Xin and Huang (2024), North American Actuarial Journal (Best Paper Award)

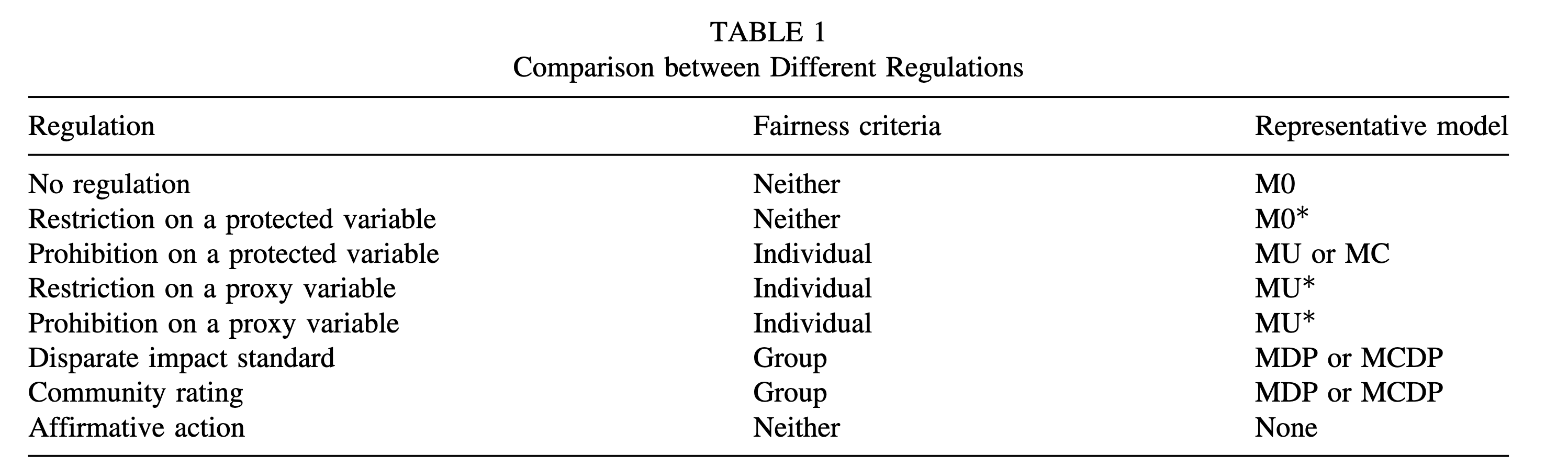

Existing Regulations

- No regulation

- Restriction on the use of a protected variable

- Prohibition on the use of a protected variable

- Restriction on the use of a proxy variable

- Prohibition on the use of a proxy variable

- Disparate impact standard

- Community rating

- Affirmative action

Quantitative Fairness Criteria

- Association-based

  - Individual fairness

  - Group fairness

    - Independence

    - Separation

    - Sufficiency (calibration)

- Causality-based

  - Counterfactual fairness

Open questions:

- Are they suitable for the insurance context?

- Are they compatible with each other?

- Which one should be used?

Further reading: Lindholm et al. (2022); Baumann and Loi (2023); Araiza Iturria, Hardy, and Marriott (2024); Charpentier (2024); Lindholm et al. (2024); Xin and Huang (2024); Côté, Côté, and Charpentier (2025); CAS Research Paper Series; SOA Research Paper Series

Fairness Criteria for Insurance Pricing

Let X_P denote the protected attribute, which is a categorical variable with only two groups, X_P \in \{a, b\}.

Let X_{NP} denote other available (non-protected) attributes, and hence the feature space is X = \{X_P, X_{NP}\}.

Let \hat{Y} denote the predictor or the decision outcome of interest, \hat{Y} \in \mathbb{R}. In our context, \hat{Y} is the premium charged by the insurer; we assume that \hat{Y} is approximately equal to the pure premium and ignore any expenses or profit loadings.

Let Y denote the observed outcome of interest, Y \in \mathbb{R}. Note that Y is not known when the policy is issued; it measures the actual claim experience observed by the insurer over a given period after policy issuance.

Fairness Criteria – Individual Fairness

Definition 1. Fairness through Unawareness (FTU): Fairness is achieved if the protected attribute X_P is not explicitly used in calculating the insurance premium \hat{Y}.
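Fairness through unawareness does not rule out indirect discrimination: if a non-protected attribute is correlated with X_P, a model fitted without X_P can still charge the two groups systematically different premiums. The simulation below is a minimal sketch under purely hypothetical assumptions (a binary protected attribute, a single proxy `x_np` whose mean is higher for group b, and claims that depend on both); the data-generating process and all coefficients are illustrative, not taken from the source.

```python
import random
import statistics


def simulate(n=20_000, seed=1):
    """Hypothetical portfolio of (x_p, x_np, y) records.

    Assumed data-generating process: x_np is a proxy whose mean is 1 higher
    for group 'b', and claims y depend on both x_np and x_p.
    """
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x_p = rng.choice(["a", "b"])
        x_np = rng.gauss(1.0 if x_p == "b" else 0.0, 1.0)
        y = 100 + 20 * x_np + (30 if x_p == "b" else 0) + rng.gauss(0, 10)
        data.append((x_p, x_np, y))
    return data


def fit_ftu(data):
    """Least-squares fit of y on x_np only: x_p is never used (fairness through unawareness)."""
    xs = [x_np for _, x_np, _ in data]
    ys = [y for _, _, y in data]
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    intercept = my - slope * mx
    return lambda x_np: intercept + slope * x_np


data = simulate()
premium = fit_ftu(data)
# Average FTU premium per protected group
mean_premium = {
    g: statistics.fmean(premium(x_np) for x_p, x_np, _ in data if x_p == g)
    for g in ("a", "b")
}
print(mean_premium)
```

Group b pays more on average even though `x_p` never enters the fitted model: `x_np` carries information about `x_p`, so removing X_P from the feature list removes only direct discrimination.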

Definition 2. Fairness through Awareness (FTA): A predictor \hat{Y} satisfies fairness through awareness if it gives similar predictions to similar individuals (Dwork et al. 2012; Kusner et al. 2017).
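Dwork et al. (2012) make "similar individuals" precise through a task-specific distance between individuals and a Lipschitz-type condition on the predictor. For a deterministic scalar premium, one simple version of that idea is |\hat{Y}(x) - \hat{Y}(x')| \le L \, d(x, x'). The sketch below checks this condition on all pairs of a tiny portfolio; the distance `distance`, the pricing function `premium` and the constant `lipschitz` are hypothetical choices, and choosing a defensible distance is precisely the hard part in practice.

```python
import itertools
import math


def distance(p, q):
    """Hypothetical similarity metric on non-protected attributes (age in decades, prior claims)."""
    return math.hypot((p["age"] - q["age"]) / 10, p["claims"] - q["claims"])


def premium(p):
    """Hypothetical pricing function."""
    return 500 + 5 * p["age"] + 150 * p["claims"]


def fta_violations(people, price, dist, lipschitz):
    """Return index pairs whose premium gap exceeds lipschitz * distance."""
    return [
        (i, j)
        for (i, p), (j, q) in itertools.combinations(enumerate(people), 2)
        if abs(price(p) - price(q)) > lipschitz * dist(p, q)
    ]


people = [
    {"age": 30, "claims": 0},
    {"age": 31, "claims": 0},
    {"age": 30, "claims": 2},
]
print(fta_violations(people, premium, distance, lipschitz=100))  # [(0, 2), (1, 2)]
print(fta_violations(people, premium, distance, lipschitz=200))  # []
```

With L = 100, a gap of 150 per prior claim is "too steep" relative to the chosen metric; with L = 200 every pair passes. The verdict therefore depends as much on d and L as on the pricing model.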

Definition 3. Counterfactual Fairness (CF): A predictor \hat{Y} is counterfactually fair for an individual if “its prediction in the real world is the same as that in the counterfactual world where the individual had belonged to a different demographic group” (Kusner et al. 2017; Wu, Zhang, and Wu 2019). Mathematically, with X_P taking only the two values \{a, b\} and U denoting the latent background variables of the assumed causal model, a predictor \hat{Y} is counterfactually fair if, for an individual with X_{NP} = x and X_P = a and for all y,

\mathbb{P}(\hat{Y}_{X_P \leftarrow a}(U) = y \mid X_{NP} = x, X_P = a) = \mathbb{P}(\hat{Y}_{X_P \leftarrow b}(U) = y \mid X_{NP} = x, X_P = a).
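Checking counterfactual fairness requires an assumed causal model. The sketch below uses a hypothetical linear structural equation in which the protected attribute shifts a non-protected attribute, X_{NP} = \alpha \cdot 1\{X_P = b\} + U. It follows the usual three steps (abduction of U from the observed data, intervention on X_P, prediction) and compares a premium that uses X_{NP} directly with one that uses only the recovered U. The structural equation, `ALPHA` and both premium functions are illustrative assumptions.

```python
ALPHA = 2.0  # assumed causal effect of X_P = 'b' on X_NP


def structural_x_np(x_p, u):
    """Assumed structural equation: X_NP = ALPHA * 1{X_P = 'b'} + U."""
    return ALPHA * (x_p == "b") + u


def abduct_u(x_p, x_np):
    """Abduction: recover the latent U consistent with the observed (x_p, x_np)."""
    return x_np - ALPHA * (x_p == "b")


def counterfactual_prediction(predictor, x_p, x_np, new_x_p):
    """Prediction in the world where X_P is set to new_x_p, holding U fixed."""
    u = abduct_u(x_p, x_np)                 # step 1: abduction
    cf_x_np = structural_x_np(new_x_p, u)   # step 2: intervention X_P <- new_x_p
    return predictor(new_x_p, cf_x_np)      # step 3: prediction


def ftu_premium(x_p, x_np):
    """Ignores x_p but uses x_np, which is causally downstream of x_p."""
    return 100 + 10 * x_np


def u_only_premium(x_p, x_np):
    """Uses only the background variable U, which is not a descendant of x_p."""
    return 100 + 10 * abduct_u(x_p, x_np)


# An individual observed with X_P = 'a' and X_NP = 1.5
for name, f in [("FTU", ftu_premium), ("U-only", u_only_premium)]:
    factual = counterfactual_prediction(f, "a", 1.5, "a")
    counterfactual = counterfactual_prediction(f, "a", 1.5, "b")
    print(name, factual, counterfactual)
```

The unaware premium changes when X_P is switched, so it is not counterfactually fair under this model, whereas the U-only premium does not. Note that the U-only premium needs X_P to recover U, so a counterfactually fair predictor need not satisfy fairness through unawareness.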

Fairness Criteria – Individual Fairness (continued)

Definition 4. Controlling for the Protected Variable (CPV): Following Definition 6 in Lindholm et al. (2022), a discrimination-free price for Y with respect to X_{NP} is defined by

h^*(X_{NP}) := \int_{x_P} \mathbb{E}[Y \mid X_{NP}, x_P] \, d\mathbb{P}^*(x_P),

where \mathbb{P}^* is a probability distribution defined on the same range as the marginal distribution \mathbb{P}(x_P) of the protected attribute; in particular, it does not depend on X_{NP}.
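With a discrete protected attribute the integral reduces to a weighted sum, h^*(x_{NP}) = \sum_{x_P} \mathbb{E}[Y \mid X_{NP} = x_{NP}, X_P = x_P] \, \mathbb{P}^*(x_P). By contrast, the unaware price \mathbb{E}[Y \mid X_{NP}] weights the same best estimates by \mathbb{P}(x_P \mid X_{NP}), which is exactly how X_{NP} acts as a proxy. The sketch below compares the two using hypothetical best-estimate prices and group mixes; taking \mathbb{P}^* to be the portfolio marginal of X_P is one common choice and is assumed here.

```python
# Hypothetical best-estimate prices E[Y | X_NP = x_np, X_P = x_p]
best_estimate = {
    ("urban", "a"): 300.0, ("urban", "b"): 500.0,
    ("rural", "a"): 200.0, ("rural", "b"): 400.0,
}
# Hypothetical conditional mix P(X_P = x_p | X_NP = x_np): group 'b' is concentrated in urban areas
mix = {
    "urban": {"a": 0.2, "b": 0.8},
    "rural": {"a": 0.8, "b": 0.2},
}
# Assumed P*: portfolio marginal of X_P (with equal urban/rural exposure this is 50/50)
p_star = {"a": 0.5, "b": 0.5}


def unaware_price(x_np):
    """E[Y | X_NP]: weights P(x_p | x_np) let x_np proxy for x_p."""
    return sum(best_estimate[(x_np, g)] * w for g, w in mix[x_np].items())


def discrimination_free_price(x_np):
    """h*(x_np): the same best estimates, averaged with weights P*(x_p) that ignore x_np."""
    return sum(best_estimate[(x_np, g)] * w for g, w in p_star.items())


for area in ("urban", "rural"):
    print(area, unaware_price(area), discrimination_free_price(area))
```

The unaware urban price (460) sits close to group b's best estimate because urban policyholders are mostly in group b; the discrimination-free price (400) removes that proxy effect while keeping the within-group urban/rural differential of 100.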

Fairness Criteria – Group Fairness

Definition 5. Demographic Parity (DP): A predictor \hat{Y} satisfies demographic parity if

\mathbb{P}(\hat{Y} \mid X_P = a) = \mathbb{P}(\hat{Y} \mid X_P = b).
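Demographic parity asks for the whole premium distribution to be the same in both groups. One way to quantify departures in a sample, assumed here for illustration, is the Kolmogorov-Smirnov distance between the two empirical distribution functions; the sketch below computes it with the standard library, using hypothetical premiums.

```python
from bisect import bisect_right


def ecdf(sample):
    """Empirical CDF of a sample, returned as a function t -> share of sample <= t."""
    s = sorted(sample)
    return lambda t: bisect_right(s, t) / len(s)


def dp_gap(premiums_a, premiums_b):
    """Kolmogorov-Smirnov distance between the two groups' premium distributions.

    Both ECDFs are step functions, so the supremum is attained at an observed premium.
    A value of 0 means demographic parity holds in-sample.
    """
    f_a, f_b = ecdf(premiums_a), ecdf(premiums_b)
    grid = set(premiums_a) | set(premiums_b)
    return max(abs(f_a(t) - f_b(t)) for t in grid)


# Hypothetical premiums charged to each group
group_a = [400, 450, 500, 550, 600]
group_b = [450, 500, 550, 600, 650]
print(dp_gap(group_a, group_b))                 # about 0.2
print(dp_gap(group_a, list(reversed(group_a))))  # 0.0
```

Because premiums are meant to track risk, a nonzero gap may simply reflect genuine risk differences between the groups, which is one reason to ask whether such criteria suit the insurance context.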

Definition 6. Relaxed Demographic Parity (RDP): A predictor \hat{Y} satisfies relaxed demographic parity, or has no disparate impact, if the following ratio is above a certain threshold \tau (Feldman et al. 2015):

\frac{\mathbb{P}(\hat{Y} = \hat{y} \mid X_P = b)}{\mathbb{P}(\hat{Y} = \hat{y} \mid X_P = a)} > \tau.
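Feldman et al. (2015) apply this ratio to a favourable binary outcome, with \tau = 0.8 corresponding to the "four-fifths rule" from US employment practice. For a continuous premium one must first decide what counts as favourable; the sketch below assumes, purely for illustration, that the favourable outcome is being quoted a premium at or below a hypothetical cap, with group a as the reference group as in the ratio above.

```python
def favourable_rate(premiums, cap):
    """Share of a group quoted a premium at or below a hypothetical cap."""
    return sum(p <= cap for p in premiums) / len(premiums)


def disparate_impact_ratio(premiums_a, premiums_b, cap):
    """P(favourable | X_P = b) / P(favourable | X_P = a), with group a as reference."""
    rate_a = favourable_rate(premiums_a, cap)
    if rate_a == 0:
        raise ValueError("reference group has no favourable outcomes; ratio undefined")
    return favourable_rate(premiums_b, cap) / rate_a


# Hypothetical premiums charged to each group
group_a = [400, 450, 500, 550, 600]
group_b = [450, 500, 550, 600, 650]
TAU = 0.8  # four-fifths rule threshold
ratio = disparate_impact_ratio(group_a, group_b, cap=500)
print(ratio, ratio > TAU)
```

Here 60% of group a but only 40% of group b fall under the cap, giving a ratio of about 0.67, below the 0.8 threshold. Changing the cap can change the verdict, so the choice of favourable outcome should be justified explicitly.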

Fairness Criteria – Group Fairness (continued)

Definition 7. Conditional Demographic Parity (CDP): A predictor \hat{Y} satisfies conditional demographic parity if

\mathbb{P}(\hat{Y} \mid X_{NP_{\text{legit}}} = x_{NP_{\text{legit}}}, X_P = a) = \mathbb{P}(\hat{Y} \mid X_{NP_{\text{legit}}} = x_{NP_{\text{legit}}}, X_P = b),

where X_{NP_{\text{legit}}} denotes a subset of “legitimate” attributes within unprotected attributes in the feature space (X_{NP_{\text{legit}}} \subseteq X_{NP} \subset X) that are permitted to affect the outcome of interest (Corbett-Davies et al. 2017; Verma and Rubin 2018).
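A simple empirical check, sketched below, compares mean premiums of the two groups within each stratum of a single legitimate attribute. Equal conditional means are necessary, but not sufficient, for conditional demographic parity, which requires the full conditional distributions to match. The attribute (driving-experience band) and the records are hypothetical.

```python
from collections import defaultdict
from statistics import fmean


def conditional_mean_gaps(records):
    """Mean premium of group 'b' minus group 'a' within each value of the legitimate attribute.

    records: iterable of (x_legit, x_p, premium) tuples. Strata missing either group are skipped.
    """
    cells = defaultdict(list)
    for x_legit, x_p, premium in records:
        cells[(x_legit, x_p)].append(premium)
    strata = sorted({x_legit for x_legit, _ in cells})
    return {
        s: fmean(cells[(s, "b")]) - fmean(cells[(s, "a")])
        for s in strata
        if cells[(s, "a")] and cells[(s, "b")]
    }


# Hypothetical portfolio: the group mix differs across experience bands
records = [
    ("novice", "a", 900), ("novice", "b", 900), ("novice", "b", 900),
    ("experienced", "a", 500), ("experienced", "a", 500), ("experienced", "b", 500),
]
overall = {g: fmean(p for _, x_p, p in records if x_p == g) for g in ("a", "b")}
print(overall)                          # group b pays more on average
print(conditional_mean_gaps(records))   # {'experienced': 0.0, 'novice': 0.0}
```

Demographic parity fails here because group b is over-represented among novices, yet the within-stratum gaps are zero; whether that is acceptable hinges entirely on whether experience is accepted as a legitimate attribute.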

Regulations, Fairness Criteria, and Models

Questions to Tackle

- Proxy discrimination (omitted variable bias)

- Conditional demographic parity

- Equalized odds (error rate parity)

- Well-calibration

- Fairness in cost prediction or market pricing?

- Fairness in terms of prices or markups?

- What if protected variables are unobserved?

- Consumer welfare vs. firm profit

- Welfare across different groups (e.g., males vs. females)